Synthetic Data Generation for Novel Object Detection

I developed a custom synthetic data generation pipeline to improve novel object detection performance in low-data scenarios. The solution won the Purple NECtar X Innovation in Defence (PN x IID) 2025 challenge.

Key Features

- Used open-source generative models (QWEN Image / QWEN Image Edit)

- Optimized data flow for creating annotated object detection datasets

- Trained custom object detection models (cross-domain few-shot object detection) using synthetic data only

- Deployed models on NVIDIA Jetson Orin for real-time inference on real-world scenarios

Technical Details

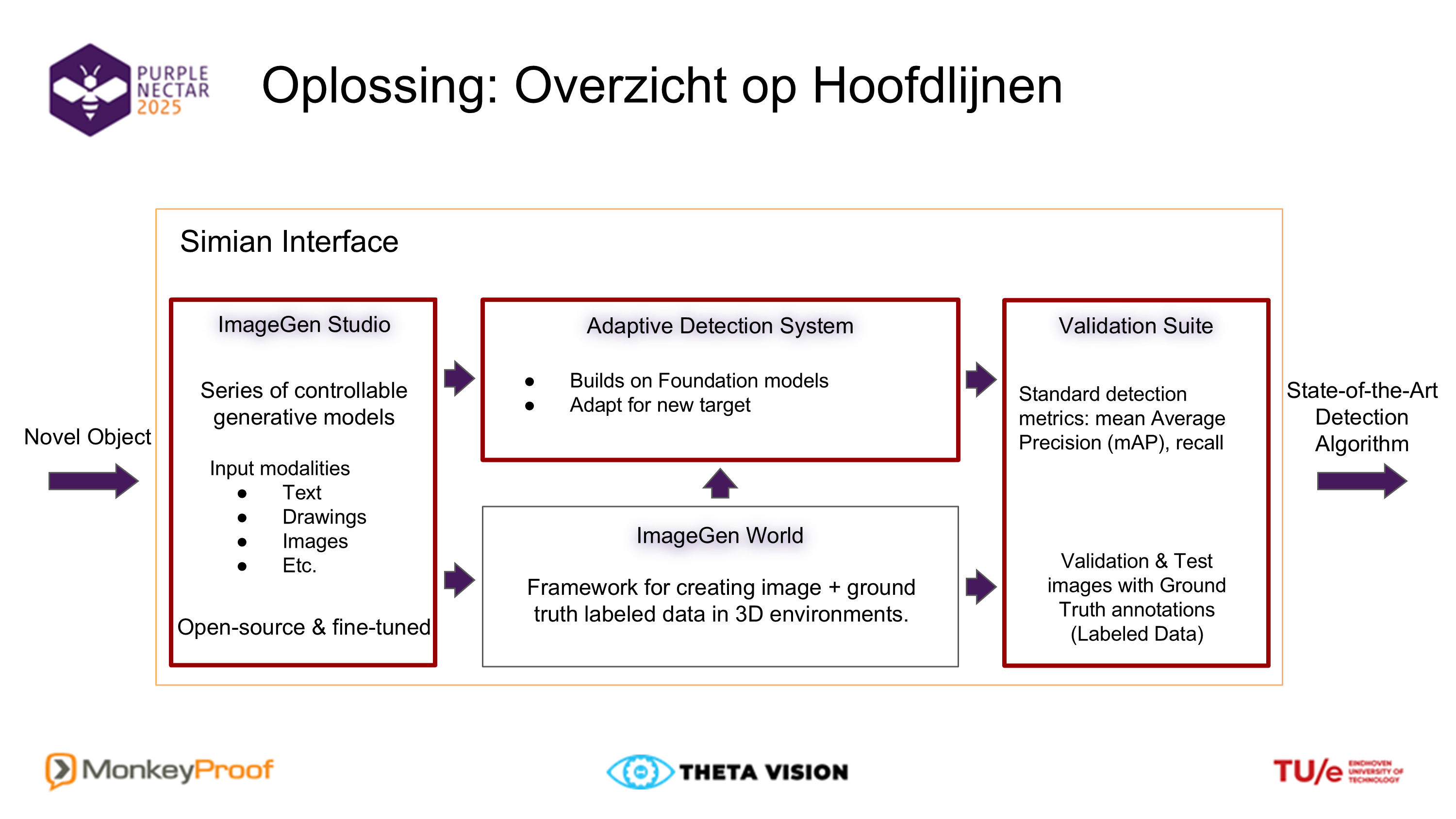

The synthetic data pipeline integrates open-source generative models, such as QWEN Image Edit, to synthesize diverse objects and scene variations and automatically generate high-quality bounding-box annotations. A modular data-flow architecture ensures efficient batch generation, post-processing, and dataset assembly tailored for few-shot, cross-domain object detection. Using only synthetic samples, I trained lightweight detection models optimized for transferability to real-world conditions and validated their performance on downstream tasks. The final models were containerized and deployed on NVIDIA Jetson Orin devices, achieving real-time inference throughput under constrained GPU and memory resources.
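To illustrate how bounding-box annotations can fall out of the generation step automatically, here is a minimal sketch. It assumes each synthesized object comes with a binary mask and a known paste position in the scene, and that labels are written in the normalized YOLO text format (`class cx cy w h`). Those assumptions, and the helper names `mask_to_bbox` and `to_yolo_label`, are hypothetical and not taken from the actual pipeline.

```python
from typing import List, Tuple

Mask = List[List[int]]  # rows of 0/1 values; 1 marks an object pixel


def mask_to_bbox(mask: Mask) -> Tuple[int, int, int, int]:
    """Tight box (x_min, y_min, x_max, y_max) around object pixels, max exclusive."""
    rows = [y for y, row in enumerate(mask) if any(row)]
    cols = [x for row in mask for x, value in enumerate(row) if value]
    if not rows:
        raise ValueError("mask contains no object pixels")
    return min(cols), min(rows), max(cols) + 1, max(rows) + 1


def to_yolo_label(
    class_id: int,
    bbox: Tuple[int, int, int, int],
    paste_xy: Tuple[int, int],
    image_wh: Tuple[int, int],
) -> str:
    """Shift a mask-space box into scene coordinates and format it as a YOLO label line."""
    x0, y0, x1, y1 = bbox
    px, py = paste_xy
    width, height = image_wh
    # Move the box to where the object was pasted, then clip it to the image.
    x0, x1 = max(0, x0 + px), min(width, x1 + px)
    y0, y1 = max(0, y0 + py), min(height, y1 + py)
    if x1 <= x0 or y1 <= y0:
        raise ValueError("object lies entirely outside the image")
    cx = (x0 + x1) / 2 / width
    cy = (y0 + y1) / 2 / height
    bw = (x1 - x0) / width
    bh = (y1 - y0) / height
    return f"{class_id} {cx:.6f} {cy:.6f} {bw:.6f} {bh:.6f}"


# Hypothetical 4x4 object mask pasted at (10, 20) into a 100x50 scene.
example_mask = [
    [0, 0, 0, 0],
    [0, 1, 1, 1],
    [0, 1, 1, 0],
    [0, 0, 0, 0],
]
example_bbox = mask_to_bbox(example_mask)
example_label = to_yolo_label(0, example_bbox, paste_xy=(10, 20), image_wh=(100, 50))
print(example_label)
```

Because the label is derived from the same mask that places the object, no manual annotation is needed in this sketch. A real pipeline would also have to handle occlusion and multiple objects per scene.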

Foundation Model for Computed Tomography

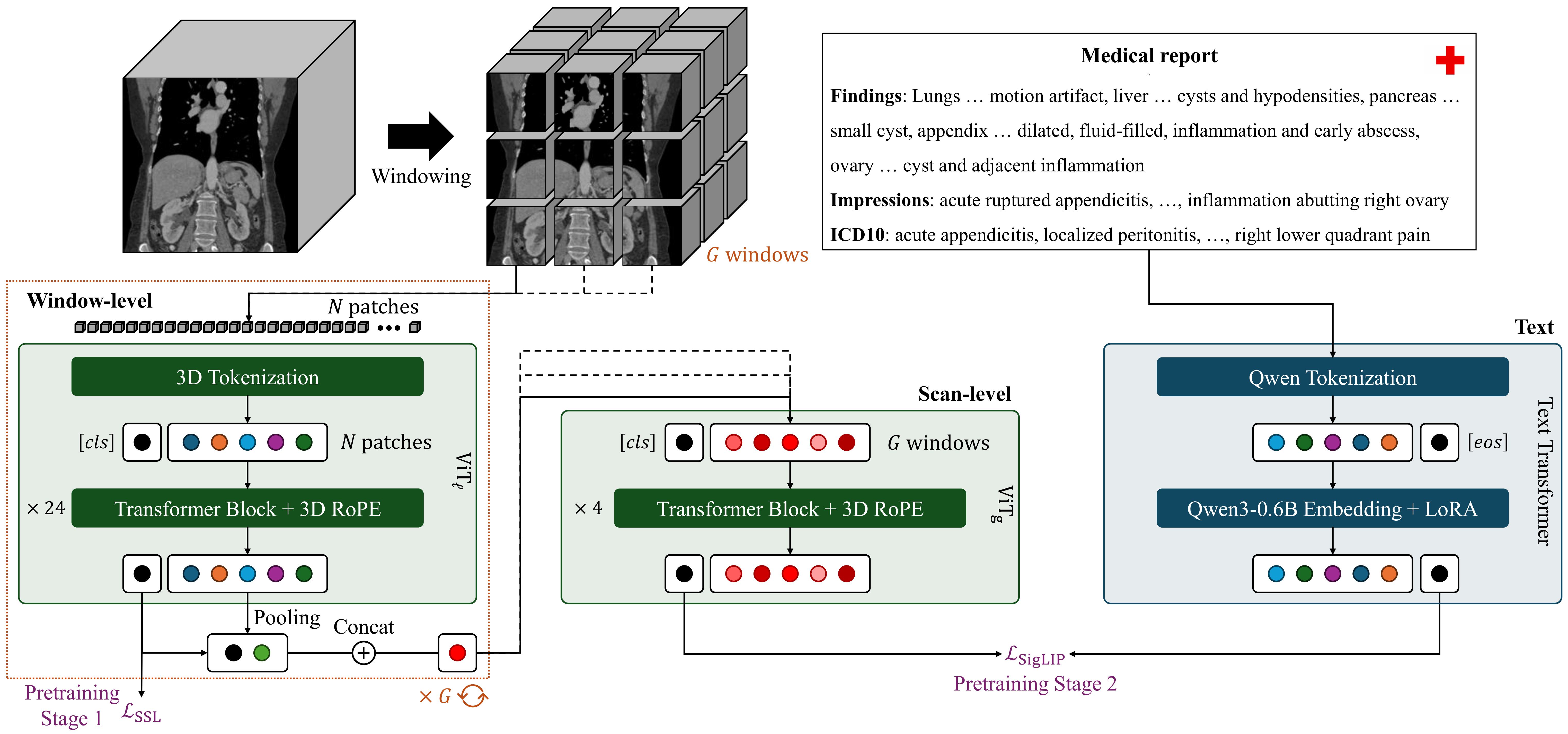

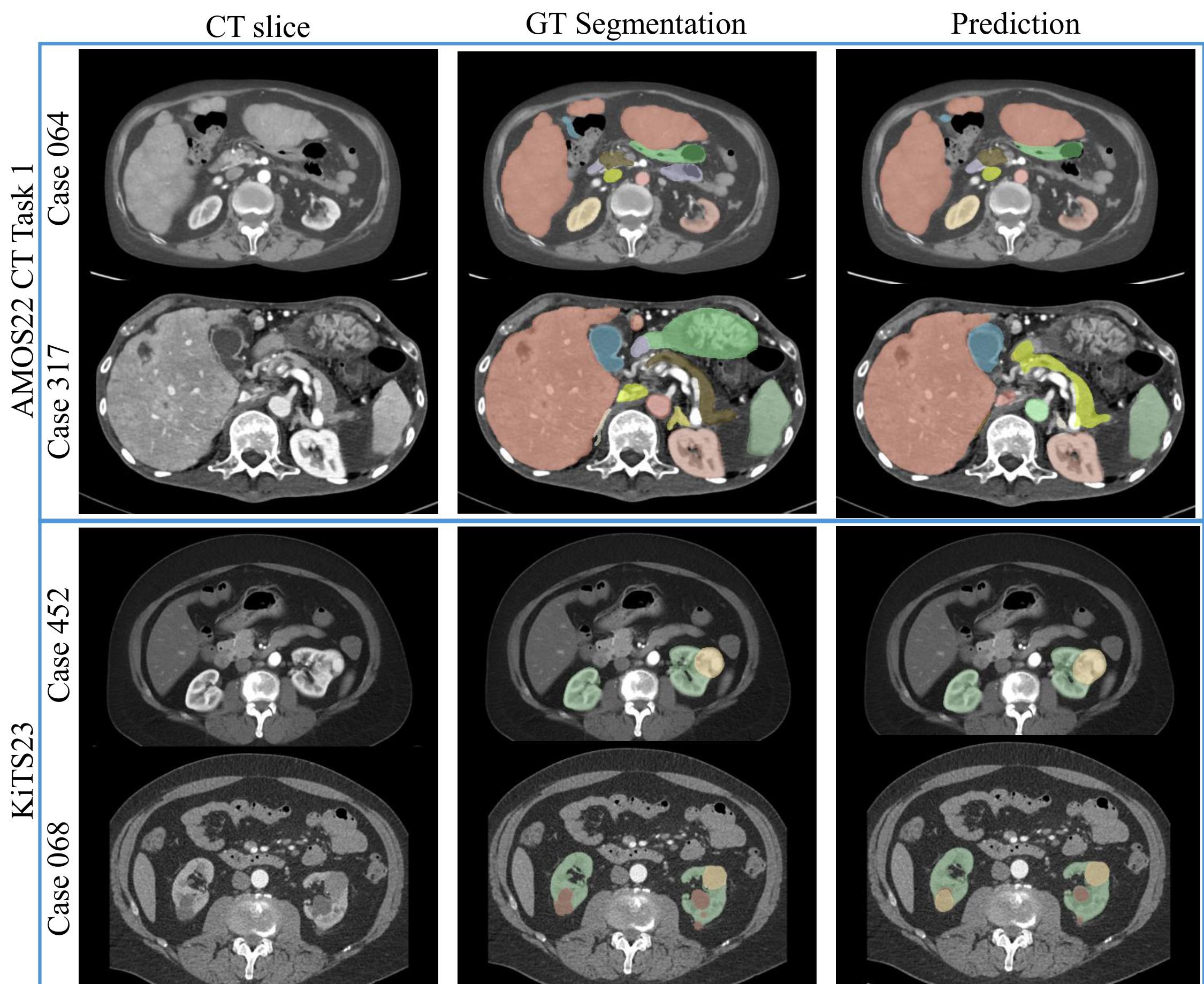

Cris Claessens and I developed SPECTRE, a fully transformer-based foundation model for volumetric computed tomography (CT). Our Self-Supervised & Cross-Modal Pretraining for CT Representation Extraction (SPECTRE) approach utilizes scalable 3D Vision Transformer architectures and modern self-supervised and vision–language pretraining strategies to learn general-purpose CT representations. Volumetric CT poses unique challenges, such as extreme token scaling, geometric anisotropy, and weak or noisy clinical supervision, that make standard transformer and contrastive learning recipes ineffective out of the box. The framework jointly optimizes a local transformer for high-resolution volumetric feature extraction and a global transformer for whole-scan context modeling, making large-scale 3D attention computationally tractable. Notably, SPECTRE is trained exclusively on openly available CT datasets, demonstrating that high-performing, generalizable representations can be achieved without relying on private data. Pretraining combines DINO-style self-distillation with SigLIP-based vision–language alignment using paired radiology reports, yielding features that are both geometrically consistent and clinically meaningful. Across multiple CT benchmarks, SPECTRE consistently outperforms prior CT foundation models in both zero-shot and fine-tuned settings, establishing SPECTRE as a scalable, open, and fully transformer-based foundation model for 3D medical imaging.

Key Features

- First fully transformer-based foundation model for volumetric CT

- Trained in a distributed fashion on NVIDIA DGX B200

- Combines DINO-style self-distillation with SigLIP-based vision–language alignment

- State-of-the-art performance on multiple CT benchmarks
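The vision–language alignment mentioned above can be illustrated with the pairwise sigmoid loss used by SigLIP. The sketch below is a dependency-free toy version, not SPECTRE's training code. The function names, the tiny 2-D embeddings, and the fixed `temperature` and `bias` values (the initial values suggested in the SigLIP paper; both are normally learned) are assumptions for illustration. The DINO-style self-distillation term is not shown.

```python
import math
from typing import List, Sequence


def l2_normalize(vector: Sequence[float]) -> List[float]:
    """Scale a vector to unit length so dot products become cosine similarities."""
    norm = math.sqrt(sum(x * x for x in vector))
    return [x / norm for x in vector]


def log_sigmoid(x: float) -> float:
    """Numerically stable log(sigmoid(x))."""
    return min(x, 0.0) - math.log1p(math.exp(-abs(x)))


def siglip_loss(
    image_emb: Sequence[Sequence[float]],
    text_emb: Sequence[Sequence[float]],
    temperature: float = 10.0,
    bias: float = -10.0,
) -> float:
    """Pairwise sigmoid loss: every (scan, report) pair is a binary classification.

    Pairs on the diagonal (i == j) are positives, all other pairs are negatives.
    """
    n = len(image_emb)
    images = [l2_normalize(v) for v in image_emb]
    texts = [l2_normalize(v) for v in text_emb]
    total = 0.0
    for i in range(n):
        for j in range(n):
            similarity = sum(a * b for a, b in zip(images[i], texts[j]))
            logit = temperature * similarity + bias
            label = 1.0 if i == j else -1.0
            total -= log_sigmoid(label * logit)
    return total / n


# Toy batch: two "scan" embeddings with matching vs. swapped "report" embeddings.
scans = [[1.0, 0.0], [0.0, 1.0]]
loss_matched = siglip_loss(scans, [[1.0, 0.0], [0.0, 1.0]])
loss_swapped = siglip_loss(scans, [[0.0, 1.0], [1.0, 0.0]])
print(loss_matched, loss_swapped)
```

Unlike a softmax-based contrastive loss, each pair contributes independently, so the loss does not require normalizing over the whole batch.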

Enhanced Computer Vision Methods for Cancer Detection and Precision Guidance in Medical Imaging

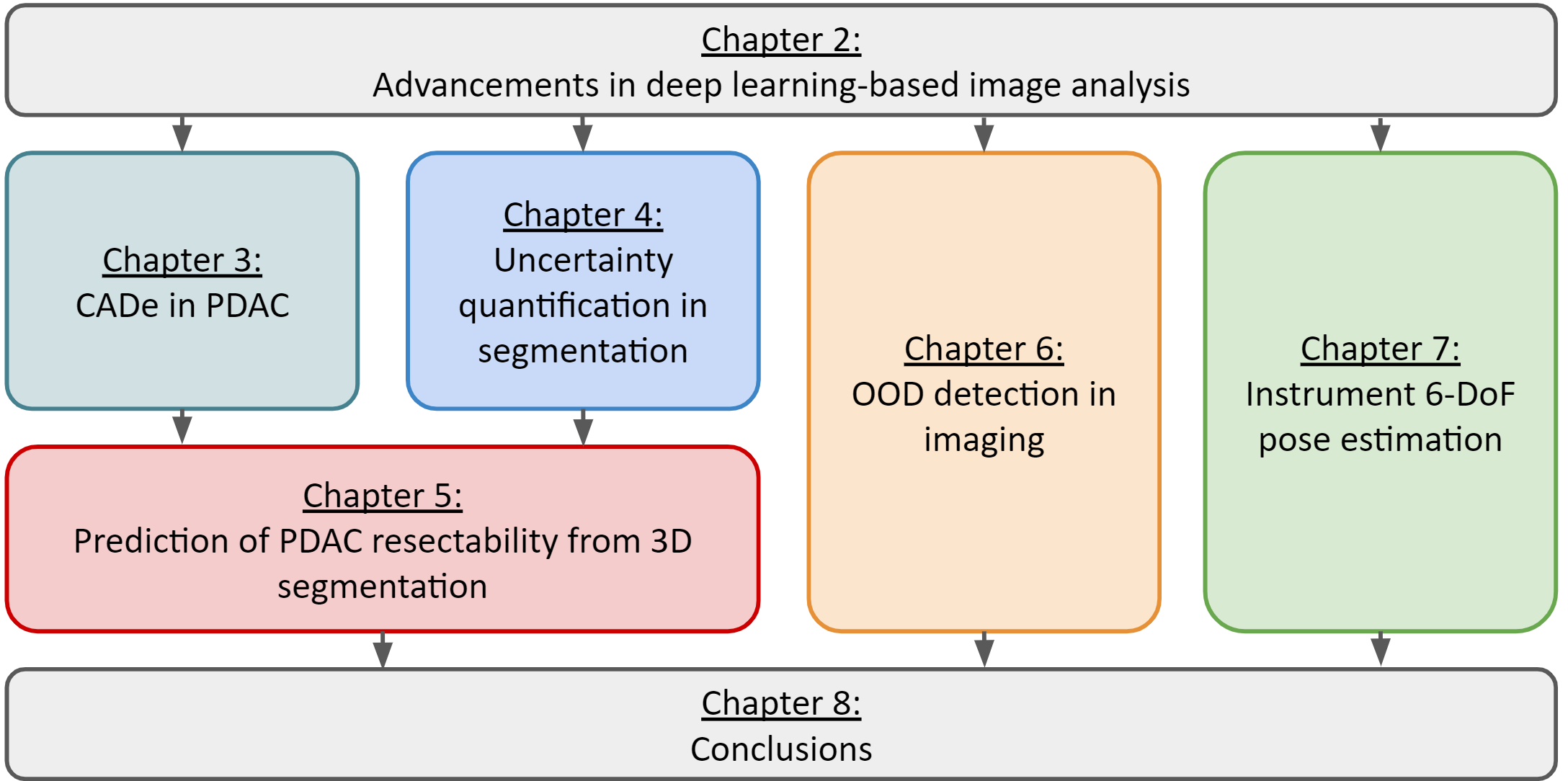

During my PhD, I developed advanced computer vision and deep learning methods to enhance cancer detection, improve segmentation robustness, quantify uncertainty, detect out-of-distribution (OOD) data, and enable precise image-guided interventions across diverse medical imaging modalities. The thesis presents novel computer-aided detection (CADe) systems for early pancreatic cancer detection using clinically meaningful secondary features; introduces improved probabilistic segmentation models using Normalizing Flows for reliable aleatoric uncertainty quantification; proposes an integrated framework for predicting pancreatic tumor resectability; advances OOD detection through wavelet-based and generative models; and delivers a general-purpose, real-time 6-DoF pose estimation method for X-ray–guided minimally invasive surgery. Collectively, these contributions push the boundaries of reliable, data-driven diagnostic and interventional support in modern medical imaging.

Key Features

- Clinically informed early PDAC detection

- Probabilistic segmentation with explicit uncertainty modeling

- A reliable framework for predicting tumor resectability

- Novel semantic and covariate OOD detection methodologies

- A unified 6-DoF pose estimation model for image-guided surgery
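For readers unfamiliar with the term, a 6-DoF pose consists of three rotations and three translations. The sketch below shows one common way to represent such a pose and apply it to a 3D point. The Euler-angle convention (`R = Rz · Ry · Rx`, angles in radians) and the function names are assumptions chosen for illustration; the thesis method may parameterize pose differently.

```python
import math
from typing import List, Sequence, Tuple

Matrix = List[List[float]]
Pose = Tuple[float, float, float, float, float, float]  # (rx, ry, rz, tx, ty, tz)


def rotation_matrix(rx: float, ry: float, rz: float) -> Matrix:
    """Rotation R = Rz(rz) @ Ry(ry) @ Rx(rx), with angles in radians."""
    cx, sx = math.cos(rx), math.sin(rx)
    cy, sy = math.cos(ry), math.sin(ry)
    cz, sz = math.cos(rz), math.sin(rz)
    return [
        [cz * cy, cz * sy * sx - sz * cx, cz * sy * cx + sz * sx],
        [sz * cy, sz * sy * sx + cz * cx, sz * sy * cx - cz * sx],
        [-sy, cy * sx, cy * cx],
    ]


def apply_pose(pose: Pose, point: Sequence[float]) -> List[float]:
    """Rotate a 3D point, then translate it: p' = R p + t."""
    rx, ry, rz, tx, ty, tz = pose
    rotation = rotation_matrix(rx, ry, rz)
    rotated = [sum(rotation[r][c] * point[c] for c in range(3)) for r in range(3)]
    return [rotated[0] + tx, rotated[1] + ty, rotated[2] + tz]


# Hypothetical pose: 90 degree rotation about z, then a shift of (1, 2, 3).
example_pose: Pose = (0.0, 0.0, math.pi / 2, 1.0, 2.0, 3.0)
moved = apply_pose(example_pose, [1.0, 0.0, 0.0])
print([round(v, 6) for v in moved])
```

A pose estimation model predicts these six numbers (or an equivalent parameterization) from an image, so that the position and orientation of a device can be tracked during an intervention.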

Skills Obtained

The PhD equipped me with a deep interdisciplinary skill set spanning advanced machine learning, medical imaging, and scientific research. I gained expertise in developing and evaluating complex deep learning models (including segmentation networks, probabilistic architectures, and generative models) for uncertainty quantification, out-of-distribution detection, and real-time pose estimation. I built end-to-end pipelines for CT, MRI, X-ray, and RGB data, translated clinical knowledge into model features, handled large-scale datasets, and engineered reliable systems suitable for clinical environments. Along the way, I strengthened my abilities in experimental design, statistical analysis, scientific writing, and cross-disciplinary collaboration across technical and medical domains.